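Amazon EKS

Amazon EKS (Elastic Kubernetes Service) provides a fully managed Kubernetes (k8s) control plane.

This post looks at how to use EKS together with other AWS services (VPC, ELB, IAM, EC2).

See the demo code at the bottom of the post.

Creating the cluster with CDK

The AWS EKS resources are created with CDK.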

```java
// Imports shared by the stack classes below (CDK v2). Each public class goes in its own file.
import java.util.Collections;

import software.amazon.awscdk.App;
import software.amazon.awscdk.Environment;
import software.amazon.awscdk.Stack;
import software.amazon.awscdk.StackProps;
import software.amazon.awscdk.services.ec2.InstanceClass;
import software.amazon.awscdk.services.ec2.InstanceSize;
import software.amazon.awscdk.services.ec2.InstanceType;
import software.amazon.awscdk.services.ec2.Vpc;
import software.amazon.awscdk.services.eks.Cluster;
import software.amazon.awscdk.services.eks.DefaultCapacityType;
import software.amazon.awscdk.services.eks.KubernetesVersion;
import software.amazon.awscdk.services.iam.AccountRootPrincipal;
import software.amazon.awscdk.services.iam.IManagedPolicy;
import software.amazon.awscdk.services.iam.ManagedPolicy;
import software.amazon.awscdk.services.iam.Role;
import software.amazon.awscdk.services.iam.ServicePrincipal;
import software.constructs.Construct;

public class EckDemoApp {
    public static void main(final String[] args) {
        App app = new App();
        String accountId = "...";
        StackProps props = StackProps.builder()
                .env(Environment.builder()
                        .account(accountId)
                        .region("ap-northeast-2")
                        .build())
                .build();
        // One stack per concern; the VPC is shared with the other stacks.
        EckDemoVpc eckDemoVpc = new EckDemoVpc(app, "EckDemoVpc", props);
        EckDemoCluster eckDemoCluster = new EckDemoCluster(app, "EckDemoCluster", props, eckDemoVpc.vpc);
        EckDemoDatabase eckDemoDatabase = new EckDemoDatabase(app, "EckDemoDatabase", props, eckDemoVpc.vpc);
        EckDemoWebService eckDemoWebService = new EckDemoWebService(app, "EckDemoWebService", props, eckDemoVpc.vpc);
        app.synth();
    }
}

public class EckDemoVpc extends Stack {
    public Vpc vpc;

    public EckDemoVpc(final Construct scope, final String id, final StackProps props) {
        super(scope, id, props);
        vpc = Vpc.Builder.create(this, "eks-work-VPC")
                .vpcName("eks-work-VPC")
                .maxAzs(3)
                .cidr("10.0.0.0/16")
                .enableDnsSupport(true)
                .enableDnsHostnames(true)
                .build();
    }
}

public class EckDemoCluster extends Stack {
    public EckDemoCluster(final Construct scope, final String id, final StackProps props, Vpc vpc) {
        super(scope, id, props);
        // Role assumed by the EKS control plane
        IManagedPolicy policy = ManagedPolicy.fromAwsManagedPolicyName("AmazonEKSClusterPolicy");
        Role eksClusterRole = Role.Builder.create(this, "eks-work-control-plane-role")
                .roleName("eks-work-control-plane-role")
                .assumedBy(ServicePrincipal.Builder.create("eks.amazonaws.com").build())
                .managedPolicies(Collections.singletonList(policy))
                .build();
        // Role used for kubectl access (see --role-arn in update-kubeconfig)
        Role clusterAdmin = Role.Builder.create(this, "eks-work-kubectl-role")
                .roleName("eks-work-kubectl-role")
                .assumedBy(new AccountRootPrincipal())
                .build();
        Cluster eksCluster = Cluster.Builder.create(this, "eks-work-cluster")
                .vpc(vpc)
                .clusterName("eks-work-cluster")
                .version(KubernetesVersion.V1_26)
                .defaultCapacity(2)
                .defaultCapacityInstance(InstanceType.of(InstanceClass.T2, InstanceSize.SMALL))
                .defaultCapacityType(DefaultCapacityType.NODEGROUP)
                .role(eksClusterRole)
                .mastersRole(clusterAdmin)
                .build();
        // Lets the CloudWatch agent on the worker nodes publish metrics
        eksCluster.getDefaultNodegroup().getRole()
                .addManagedPolicy(ManagedPolicy.fromAwsManagedPolicyName("CloudWatchAgentServerPolicy"));
    }
}
```

Deploy as shown below, then configure access to the EKS cluster.

```shell
cdk bootstrap
cdk synth
cdk deploy EckDemoVpc
cdk deploy EckDemoCluster

aws eks list-clusters

# Look up the account ID of the current credentials
export ACCOUNT_ID=$(aws sts get-caller-identity --query "Account" --output text)

# Update $HOME/.kube/config
aws eks --region ap-northeast-2 update-kubeconfig \
  --name eks-work-cluster \
  --role-arn arn:aws:iam::${ACCOUNT_ID}:role/eks-work-kubectl-role

# Check the context in ~/.kube/config
kubectl config get-contexts

kubectl get nodes
```

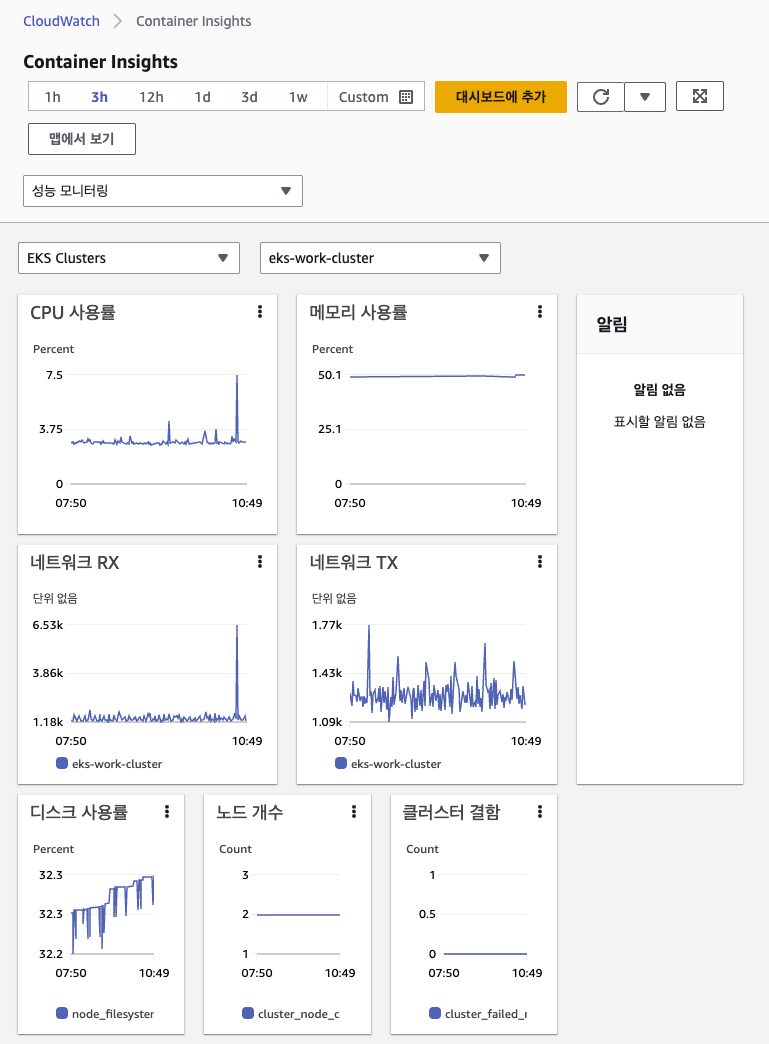

Monitoring

https://docs.aws.amazon.com/ko_kr/AmazonCloudWatch/latest/monitoring/Container-Insights-setup-metrics.html

Install the following resources:

namespace

service-account (ClusterRole)

configmap

daemonset

```shell
# Run inside the /cloudwatch-yaml directory

curl -O https://raw.githubusercontent.com/aws-samples/amazon-cloudwatch-container-insights/latest/k8s-deployment-manifest-templates/deployment-mode/daemonset/container-insights-monitoring/cloudwatch-namespace.yaml

curl -O https://raw.githubusercontent.com/aws-samples/amazon-cloudwatch-container-insights/latest/k8s-deployment-manifest-templates/deployment-mode/daemonset/container-insights-monitoring/cwagent/cwagent-serviceaccount.yaml

curl -O https://raw.githubusercontent.com/aws-samples/amazon-cloudwatch-container-insights/latest/k8s-deployment-manifest-templates/deployment-mode/daemonset/container-insights-monitoring/cwagent/cwagent-configmap.yaml

curl -O https://raw.githubusercontent.com/aws-samples/amazon-cloudwatch-container-insights/latest/k8s-deployment-manifest-templates/deployment-mode/daemonset/container-insights-monitoring/cwagent/cwagent-daemonset.yaml

# Apply the downloaded manifests (assumes they are the only YAML files in this directory)
kubectl apply -f .

kubectl get all -n amazon-cloudwatch
```

Listing all resources shows that the DaemonSet runs one pod per node.

```text
NAME                         READY   STATUS    RESTARTS   AGE
pod/cloudwatch-agent-4f6kv   1/1     Running   0          19h
pod/cloudwatch-agent-7qqjv   1/1     Running   0          19h

NAME                              DESIRED   CURRENT   READY   UP-TO-DATE   AVAILABLE   NODE SELECTOR            AGE
daemonset.apps/cloudwatch-agent   2         2         2       2            2           kubernetes.io/os=linux   19h
```

After a few hours, the collected metrics become visible in CloudWatch.

EBS StorageClass

Stateful services are typically built with a PVC and a StorageClass. The StorageClass is often backed by AWS EBS or AWS EFS.

Installing the EBS CSI driver

From EKS 1.23 onward, a StorageClass can no longer use AWS EBS directly; AWS EBS is accessible only through the EBS CSI (Container Storage Interface) driver.

https://docs.aws.amazon.com/ko_kr/eks/latest/userguide/ebs-csi.html

```shell
# Set the cluster name
export CLUSTER_NAME=eks-work-cluster
export ACCOUNT_ID=$(aws sts get-caller-identity --query "Account" --output text)

# Create the IAM role for the Amazon EBS CSI plugin
eksctl create iamserviceaccount \
  --name ebs-csi-controller-sa \
  --namespace kube-system \
  --cluster ${CLUSTER_NAME} \
  --role-name AmazonEKS_EBS_CSI_DriverRole \
  --role-only \
  --attach-policy-arn arn:aws:iam::aws:policy/service-role/AmazonEBSCSIDriverPolicy \
  --approve

# List the available Amazon EBS CSI add-on versions
aws eks describe-addon-versions --addon-name aws-ebs-csi-driver

# Install the driver
eksctl create addon --name aws-ebs-csi-driver \
  --cluster ${CLUSTER_NAME} \
  --service-account-role-arn arn:aws:iam::${ACCOUNT_ID}:role/AmazonEKS_EBS_CSI_DriverRole --force

# Check the installed driver version
eksctl get addon --name aws-ebs-csi-driver --cluster ${CLUSTER_NAME}

# Update the driver (set EBS_DRIVER_UPDATE_VERSION to the target version first)
eksctl update addon --name aws-ebs-csi-driver \
  --version ${EBS_DRIVER_UPDATE_VERSION} \
  --cluster ${CLUSTER_NAME} --force

# Remove the driver
eksctl delete addon --cluster ${CLUSTER_NAME} --name aws-ebs-csi-driver --preserve
```
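The post installs the driver but does not show a StorageClass that uses it. As a rough sketch, a StorageClass backed by the EBS CSI driver names `ebs.csi.aws.com` as its provisioner, and a PVC then refers to that class. The resource names `ebs-gp3` and `demo-data`, the `gp3` volume type, and the 1Gi size are illustrative assumptions, not values from the demo repo.

```shell
# Write a hypothetical StorageClass + PVC manifest to a temp file.
sc_manifest=$(mktemp)
cat > "$sc_manifest" <<'EOF'
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: ebs-gp3                  # hypothetical name
provisioner: ebs.csi.aws.com     # the EBS CSI driver
parameters:
  type: gp3                      # assumed volume type
volumeBindingMode: WaitForFirstConsumer
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: demo-data                # hypothetical name
spec:
  accessModes: ["ReadWriteOnce"]
  storageClassName: ebs-gp3
  resources:
    requests:
      storage: 1Gi
EOF

cat "$sc_manifest"
```

It could then be applied with `kubectl apply -f "$sc_manifest"`. With `WaitForFirstConsumer`, the EBS volume is created only once a pod that uses the claim is scheduled.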

Deploying services

This section shows how to deploy Spring Boot based services.

Spring Boot services fall into two groups, depending on whether or not they use the Spring k8s dependencies shown below.

```groovy
dependencies {
    implementation 'org.springframework.cloud:spring-cloud-starter-kubernetes-client-config'
    implementation 'org.springframework.cloud:spring-cloud-starter-kubernetes-client'
    implementation 'org.springframework.cloud:spring-cloud-starter-loadbalancer'
}
```

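A service that uses them needs a k8s Role that allows it to access k8s resources.

As the demo code at the bottom of the post shows, [calc, greet] are the services that use the Spring k8s dependencies.

Create and bind the Role beforehand with the command below.

```shell
kubectl apply -f k8s/eks/rbac.yaml
```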
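The contents of rbac.yaml are not shown in this post. The sketch below is only a guess at the general shape: a Role that lets the Spring k8s client read ConfigMaps and discover services, bound to a ServiceAccount. The names `spring-k8s-reader` and `spring-k8s-reader-binding`, the use of the `default` ServiceAccount, and the exact resource list are assumptions; the `spring` namespace is taken from calc-deployment.yaml later in this post. The real file in the demo repo may differ.

```shell
# Write a hypothetical Role + RoleBinding manifest to a temp file.
rbac_manifest=$(mktemp)
cat > "$rbac_manifest" <<'EOF'
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  namespace: spring
  name: spring-k8s-reader            # hypothetical name
rules:
  - apiGroups: [""]
    resources: ["configmaps", "pods", "services", "endpoints"]
    verbs: ["get", "list", "watch"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  namespace: spring
  name: spring-k8s-reader-binding    # hypothetical name
subjects:
  - kind: ServiceAccount
    name: default                    # assumes pods run as the default ServiceAccount
    namespace: spring
roleRef:
  kind: Role
  name: spring-k8s-reader
  apiGroup: rbac.authorization.k8s.io
EOF

cat "$rbac_manifest"
```

Read-only verbs are enough for loading configuration and looking up other services. Once the binding exists, `kubectl auth can-i list configmaps -n spring --as=system:serviceaccount:spring:default` is one way to check it.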

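Deploying the calc and greet services

[calc, greet] are simple test services that do not use a DB.

They are deployed in the following steps:

Create the AWS ECR repositories

Build and push the Docker images

Deploy the k8s deployments

Creating the AWS ECR repositories

The ECR repositories are created through CDK.

```java
import software.amazon.awscdk.Stack;
import software.amazon.awscdk.StackProps;
import software.amazon.awscdk.services.ec2.Vpc;
import software.amazon.awscdk.services.ecr.Repository;
import software.constructs.Construct;

public class EckDemoWebService extends Stack {
    public EckDemoWebService(final Construct scope, final String id, final StackProps props, Vpc vpc) {
        super(scope, id, props);
        // One ECR repository per service image
        Repository.Builder.create(this, "calculating-repo")
                .repositoryName("calculating-repo")
                .build();
        Repository.Builder.create(this, "greeting-repo")
                .repositoryName("greeting-repo")
                .build();
    }
}
```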

Building and pushing the Docker images

Each image goes through the [build, tag, push] steps shown below.

```shell
# Run inside the api/calculating directory
gradle build
docker build -t calculating-repo:1.0.0 .
docker tag calculating-repo:1.0.0 $ACCOUNT_ID.dkr.ecr.ap-northeast-2.amazonaws.com/calculating-repo:1.0.0
docker push $ACCOUNT_ID.dkr.ecr.ap-northeast-2.amazonaws.com/calculating-repo:1.0.0

# Run inside the api/greeting directory
gradle build
docker build -t greeting-repo:1.0.0 .
docker tag greeting-repo:1.0.0 $ACCOUNT_ID.dkr.ecr.ap-northeast-2.amazonaws.com/greeting-repo:1.0.0
docker push $ACCOUNT_ID.dkr.ecr.ap-northeast-2.amazonaws.com/greeting-repo:1.0.0
```
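The push commands above only succeed once Docker is authenticated against the ECR registry, a step the post does not show. A minimal sketch, assuming a recent AWS CLI and Docker are installed and credentials are configured; the helper names `ecr_registry` and `ecr_login` are made up for illustration.

```shell
# Hypothetical helper: print the ECR registry host for an account ID and region.
ecr_registry() {
  printf '%s.dkr.ecr.%s.amazonaws.com\n' "$1" "$2"
}

# Hypothetical helper: log Docker in to that registry.
# Needs the aws and docker CLIs plus valid AWS credentials.
ecr_login() {
  aws ecr get-login-password --region "$2" |
    docker login --username AWS --password-stdin "$(ecr_registry "$1" "$2")"
}
```

Calling `ecr_login "$ACCOUNT_ID" ap-northeast-2` before the first `docker push` would be enough. The login token expires after a while, so the call may need repeating in a later session.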

Deploying the k8s ConfigMap and deployments

The envsubst command lets you pass values into a manifest as parameters, as shown below.

```shell
kubectl apply -f k8s/eks/config.yaml

ECR_REGION_URL=$ACCOUNT_ID.dkr.ecr.ap-northeast-2.amazonaws.com/greeting-repo:1.0.0 \
envsubst < k8s/eks/greet-deployment.yaml | \
kubectl apply -f -

ECR_REGION_URL=$ACCOUNT_ID.dkr.ecr.ap-northeast-2.amazonaws.com/calculating-repo:1.0.0 \
envsubst < k8s/eks/calc-deployment.yaml | \
kubectl apply -f -
```
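Called without arguments, envsubst replaces every `$VAR` it finds, which can clobber unrelated `$` expressions in a manifest. GNU envsubst accepts a list of variables to restrict the substitution. The sketch below shows this on a two-line stand-in template; the `render` helper, the `example.com/...` image reference, and the `$HOME` line are illustrative assumptions, and the `sed` branch is only a fallback for machines without envsubst.

```shell
# Stand-in for k8s/eks/calc-deployment.yaml: one placeholder plus an unrelated $HOME.
template=$(mktemp)
cat > "$template" <<'EOF'
image: ${ECR_REGION_URL}
args: ["$HOME"]
EOF

# Hypothetical helper: substitute only ${ECR_REGION_URL} with the value in $1.
render() {
  if command -v envsubst >/dev/null 2>&1; then
    ECR_REGION_URL=$1 envsubst '${ECR_REGION_URL}'
  else
    sed "s|\${ECR_REGION_URL}|$1|g"
  fi
}

# example.com/... is a placeholder image reference, not a real registry.
rendered=$(render example.com/calculating-repo:1.0.0 < "$template")
printf '%s\n' "$rendered"
rm -f "$template"
```

The `image:` line comes out filled in while `$HOME` stays literal. In the deploy commands above, the same effect would come from writing `envsubst '${ECR_REGION_URL}'` instead of plain `envsubst`.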

Looking only at calc-deployment.yaml, it consists of a [Deployment, Service(LoadBalancer)] pair, as shown below.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  namespace: spring
  name: calc-deployment
  labels:
    app: calc-deployment
spec:
  replicas: 1
  selector:
    matchLabels:
      app: calc-deployment
  template:
    metadata:
      labels:
        app: calc-deployment
    spec:
      containers:
        - name: calc-deployment
          image: ${ECR_REGION_URL}
          imagePullPolicy: Always
          livenessProbe:
            httpGet:
              path: /actuator/health/liveness
              port: 8080
            initialDelaySeconds: 5
            periodSeconds: 5
          readinessProbe:
            httpGet:
              path: /actuator/health/readiness
              port: 8080
            initialDelaySeconds: 5
            periodSeconds: 5
          ports:
            - containerPort: 8080
---
apiVersion: v1
kind: Service
metadata:
  name: calc-service
spec:
  type: LoadBalancer
  selector:
    app: calc-deployment
  ports:
    - protocol: TCP
      port: 8080
      targetPort: 8080
```

After applying, check the LoadBalancer's CLUSTER-IP and EXTERNAL-IP with the command below. The EC2 > Load Balancers tab in the AWS Console also shows that a Classic Load Balancer was created.

```text
kubectl get all
NAME                                    READY   STATUS    RESTARTS     AGE
pod/calc-deployment-5567f6f69f-6s4fp    1/1     Running   0            9h
pod/greet-deployment-69b5fff8df-vt7mg   1/1     Running   1 (9h ago)   9h

NAME                    TYPE           CLUSTER-IP       EXTERNAL-IP                            PORT(S)          AGE
service/calc-service    LoadBalancer   172.20.223.154   ....ap-northeast-2.elb.amazonaws.com   8080:31922/TCP   9h
service/greet-service   LoadBalancer   172.20.227.106   ....ap-northeast-2.elb.amazonaws.com   8080:31787/TCP   9h
```

The curl commands below confirm that the ConfigMap data is loaded and that the services can talk to each other.

```text
curl -s http://....ap-northeast-2.elb.amazonaws.com:8080/calculating
Hello Calc:Hello Demo%

curl -s http://....ap-northeast-2.elb.amazonaws.com:8080/greeting/1/2
3
```

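Deploying the region service

The region service does not use the Spring k8s dependencies, and it uses RDS.

It is deployed in the following steps:

Create the AWS RDS database

Create the AWS ECR repository

Build and push the Docker image

Deploy the k8s deployment

Creating the AWS RDS database

The database is created as Aurora PostgreSQL Serverless v2.

Because the Serverless cluster is an internal service, it cannot be reached from outside its VPC.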

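```java
import java.util.Collections;

import software.amazon.awscdk.SecretValue;
import software.amazon.awscdk.Stack;
import software.amazon.awscdk.StackProps;
import software.amazon.awscdk.services.ec2.Peer;
import software.amazon.awscdk.services.ec2.Port;
import software.amazon.awscdk.services.ec2.SecurityGroup;
import software.amazon.awscdk.services.ec2.Vpc;
import software.amazon.awscdk.services.rds.AuroraPostgresClusterEngineProps;
import software.amazon.awscdk.services.rds.AuroraPostgresEngineVersion;
import software.amazon.awscdk.services.rds.ClusterInstance;
import software.amazon.awscdk.services.rds.Credentials;
import software.amazon.awscdk.services.rds.DatabaseCluster;
import software.amazon.awscdk.services.rds.DatabaseClusterEngine;
import software.amazon.awscdk.services.rds.ServerlessV2ClusterInstanceProps;
import software.constructs.Construct;

public class EckDemoDatabase extends Stack {
    public EckDemoDatabase(final Construct scope, final String id, final StackProps props, Vpc vpc) {
        super(scope, id, props);
        SecurityGroup sgPostgre = SecurityGroup.Builder.create(this, "eks-work-sg-postgre")
                .securityGroupName("eks-work-sg-postgre")
                .vpc(vpc)
                .allowAllOutbound(true)
                .build();
        // Allows PostgreSQL traffic (5432) from any IPv4 address
        sgPostgre.addIngressRule(Peer.anyIpv4(), Port.tcp(5432));

        // Demo-only credentials, hardcoded in source
        String databaseUsername = "mywork";
        String databasePassword = "myworkpassword";

        DatabaseCluster dbCluster = DatabaseCluster.Builder.create(this, "eks-work-db-postgre")
                .vpc(vpc)
                .writer(ClusterInstance.serverlessV2("eks-work-db-postgre-serverless",
                        ServerlessV2ClusterInstanceProps.builder().build()))
                .engine(DatabaseClusterEngine.auroraPostgres(AuroraPostgresClusterEngineProps.builder()
                        .version(AuroraPostgresEngineVersion.VER_14_7)
                        .build()))
                .credentials(Credentials.fromPassword(databaseUsername, SecretValue.plainText(databasePassword)))
                .securityGroups(Collections.singletonList(sgPostgre))
                .serverlessV2MinCapacity(2)
                .serverlessV2MaxCapacity(4)
                .defaultDatabaseName("myworkdb")
                .build();
    }
}
```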

k8s deployment

The [AWS ECR repository creation, Docker image build & push] steps are omitted here; this section goes straight to the k8s deployment.

The region service stores the DB ID/PW in a k8s Secret. Using envsubst keeps the sensitive values out of the manifest file.

```shell
export RDS_HOST_NAME=eckdemo.....ap-northeast-2.rds.amazonaws.com

DB_URL=jdbc:postgresql://$RDS_HOST_NAME/myworkdb \
DB_PASSWORD=myworkpassword \
envsubst < k8s/eks/db-secret.yaml | \
kubectl apply -f -
```
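db-secret.yaml itself is not shown in this post. The sketch below is one way such a template could look, inferred from the `db-config` Secret name and the `db-url`, `db-username`, and `db-password` keys that the region deployment manifest reads. The use of `stringData` and the literal `mywork` username (the value set in the CDK code) are assumptions; the real file in the demo repo may differ.

```shell
# Write a hypothetical db-secret.yaml template to a temp file.
# The quoted 'EOF' keeps ${DB_URL} and ${DB_PASSWORD} literal so envsubst can fill them in later.
secret_template=$(mktemp)
cat > "$secret_template" <<'EOF'
apiVersion: v1
kind: Secret
metadata:
  name: db-config
type: Opaque
stringData:
  db-url: ${DB_URL}
  db-username: mywork
  db-password: ${DB_PASSWORD}
EOF

cat "$secret_template"
```

`stringData` takes plain strings and lets the API server do the base64 encoding, so the template needs no encoding step. It would be piped through `envsubst` and `kubectl apply -f -` just like the command above.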

```shell
export ACCOUNT_ID=$(aws sts get-caller-identity --query "Account" --output text)
ECR_REGION_URL=$ACCOUNT_ID.dkr.ecr.ap-northeast-2.amazonaws.com/region-repo:1.0.0 \
envsubst < k8s/eks/region-deployment.yaml | \
kubectl apply -f -
```

Because the region service does not use the Spring k8s dependencies, it takes its settings from env variables.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: region-app
  labels:
    app: region-app
spec:
  replicas: 2
  selector:
    matchLabels:
      app: region-app
  template:
    metadata:
      labels:
        app: region-app
    spec:
      containers:
        - name: region-app
          image: ${ECR_REGION_URL}
          imagePullPolicy: Always
          ports:
            - containerPort: 8080
          env:
            - name: DB_URL
              valueFrom:
                secretKeyRef:
                  key: db-url
                  name: db-config
            - name: DB_USERNAME
              valueFrom:
                secretKeyRef:
                  key: db-username
                  name: db-config
            - name: DB_PASSWORD
              valueFrom:
                secretKeyRef:
                  key: db-password
                  name: db-config
          readinessProbe:
            httpGet:
              port: 8080
              path: /health
            initialDelaySeconds: 15
            periodSeconds: 30
          livenessProbe:
            httpGet:
              port: 8080
              path: /health
            initialDelaySeconds: 30
            periodSeconds: 30
          resources:
            requests:
              cpu: 100m
              memory: 512Mi
            limits:
              cpu: 250m
              memory: 768Mi
          lifecycle:
            preStop:
              exec:
                command: [ "/bin/sh", "-c", "sleep 2" ]
---
apiVersion: v1
kind: Service
metadata:
  name: region-app-service
spec:
  type: LoadBalancer
  selector:
    app: region-app
  ports:
    - protocol: TCP
      port: 8080
      targetPort: 8080
```

Logging

https://docs.aws.amazon.com/ko_kr/AmazonCloudWatch/latest/monitoring/Container-Insights-setup-logs.html

A Fluentd DaemonSet is used to store logs in CloudWatch Logs. The resources involved are:

namespace

service-account (ClusterRole)

configmap

daemonset

Creating the [namespace, service-account] is the same as in the monitoring section above.

Creating the ConfigMap

```shell
kubectl create configmap cluster-info -n amazon-cloudwatch \
  --from-literal=cluster.name=eks-work-cluster \
  --from-literal=logs.region=ap-northeast-2

# Print the result
kubectl get configmap cluster-info -n amazon-cloudwatch -o yaml
```

The two literals registered above appear under the data attribute, as shown below.

```yaml
apiVersion: v1
data:
  cluster.name: eks-work-cluster
  logs.region: ap-northeast-2
kind: ConfigMap
metadata:
  creationTimestamp: "2023-06-24T13:16:06Z"
  name: cluster-info
  namespace: amazon-cloudwatch
  resourceVersion: "493112"
  uid: c53e7cf8-49cc-44c7-b891-6ddd25c6b459
```

Creating the Fluentd DaemonSet

```shell
curl -O https://raw.githubusercontent.com/aws-samples/amazon-cloudwatch-container-insights/latest/k8s-deployment-manifest-templates/deployment-mode/daemonset/container-insights-monitoring/fluentd/fluentd.yaml
```

Besides the DaemonSet, fluentd.yaml also defines the [ServiceAccount, ClusterRole, ClusterRoleBinding, ConfigMap] resources. The ConfigMap holds the XML-style conf that Fluentd uses.

```shell
kubectl apply -f fluentd.yaml
# serviceaccount/fluentd created
# clusterrole.rbac.authorization.k8s.io/fluentd-role created
# clusterrolebinding.rbac.authorization.k8s.io/fluentd-role-binding created
# configmap/fluentd-config created
# daemonset.apps/fluentd-cloudwatch created
```

In the AWS Console, follow the path below:

```text
CloudWatch > Log groups > /aws/containerinsights/eks-work-cluster/application
```

The logs for the [greet, calc, region] services created so far are there.
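The same log group can also be read from a terminal instead of the console. A small sketch, assuming AWS CLI v2 (which provides `aws logs tail`); the helper name `container_insights_log_group` is made up for illustration and simply rebuilds the path shown above from the cluster name.

```shell
# Hypothetical helper: print the Container Insights application log group for a cluster.
container_insights_log_group() {
  printf '/aws/containerinsights/%s/application\n' "$1"
}

container_insights_log_group eks-work-cluster
```

With credentials configured, `aws logs tail "$(container_insights_log_group eks-work-cluster)" --since 10m --follow` would stream the most recent entries.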

Demo code

https://github.com/Kouzie/eks-cdk-demo

https://github.com/Kouzie/spring-kube-demo